
Xiao-Lei Li | 李晓磊

I'm a Ph.D. candidate in the CSCG Lab at Tsinghua University in Beijing, China, where I do research in 3D Vision, AIGC, and Computer Graphics under the supervision of Prof. Shi-Min Hu. Prior to this, I completed my Master's degree in the ITML Lab at Tsinghua University, advised by Shu-Tao Xia. I received my bachelor's degree from the Physics Department at Jilin University. I had a wonderful time as a research intern in the Visual Computing Research Group at Microsoft Research Asia, working with Dr. Xin Tong and Dr. Jiaolong Yang. I was also a visiting student in the IIIS Department at Tsinghua University, supervised by Prof. Li Yi.


News

- [2025-08] 📍 Attended SIGGRAPH 2025 in Vancouver, Canada.

- [2025-06] 🎉 Our paper SDLKF accepted to SMI 2025 and published in Computers & Graphics (CG).

- [2025-03] 🎉 Our paper RELATE3D accepted to SIGGRAPH 2025.

- [2024-12] 📍 Attended SIGGRAPH Asia 2024 in Tokyo, Japan.

- [2024-07] 🎉 Our paper DIScene accepted to SIGGRAPH Asia 2024.

Research

My primary research interest lies in 3D/4D Artificial Intelligence Generated Content (AIGC), at the intersection of computer vision and graphics. I am currently exploring how video and 3D interact in latent space, especially camera and lighting control in world-model techniques. Some papers are highlighted.


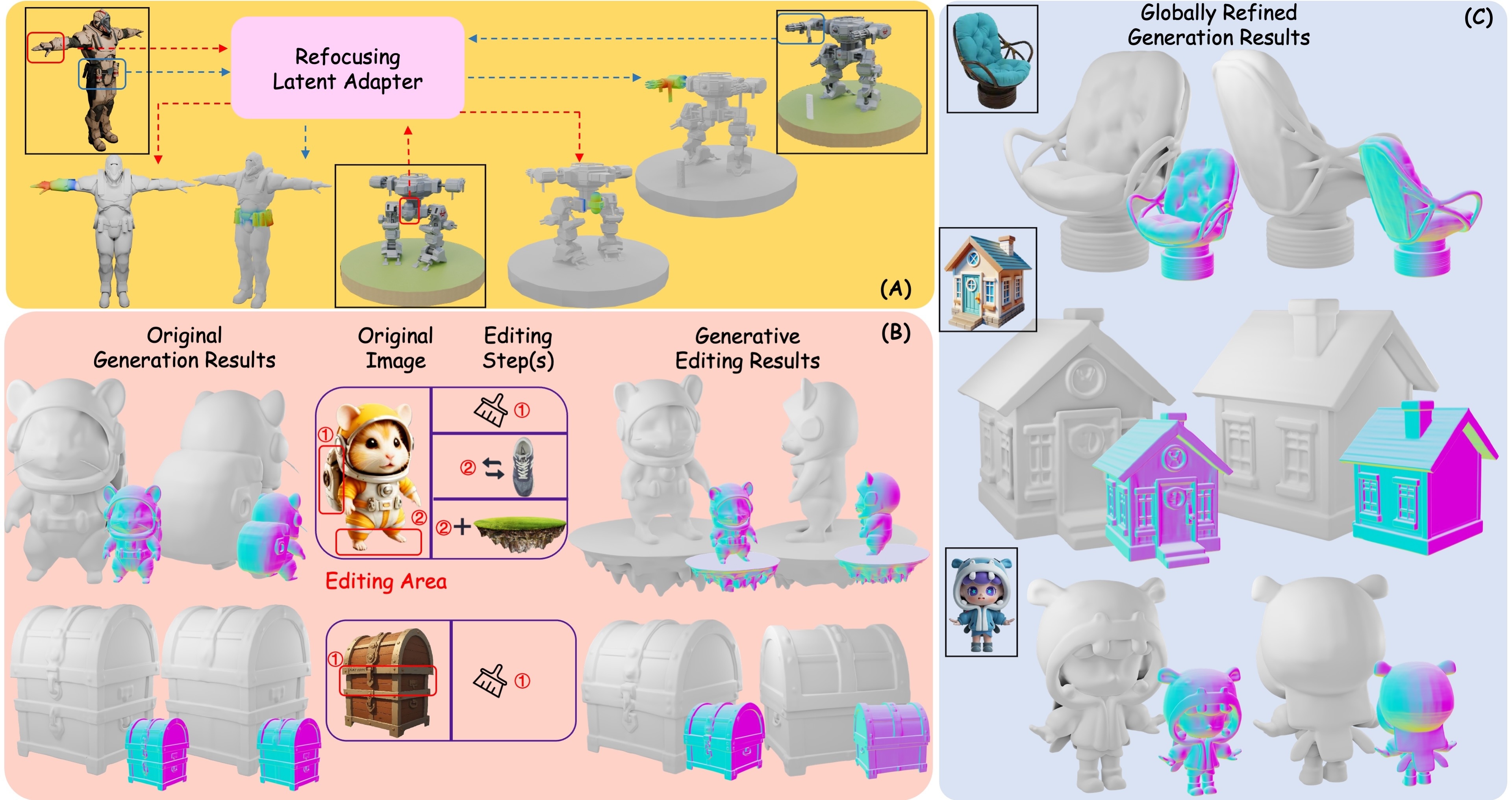

RELATE3D: REfocusing Latent Adapter for Targeted local Enhancement and Editing in 3D Generation

Xiao-Lei Li, Hao-Xiang Chen, Yanni Zhang, Kai Ma, Alan Zhao, Tai-Jiang Mu, Hao-Xiang Guo, Ran Zhang

SIGGRAPH, 2025

project page / paper link

We explore and reveal the characteristics of the native 3D latent space for 3D generation and make it decomposable and low-rank. This enables efficient learning for multimodal local alignment and achieves precise local enhancement and part-level editing of 3D geometry.


DIScene: Object Decoupling and Interaction Modeling for Complex Scene Generation

Xiao-Lei Li, Haodong Li, Hao-Xiang Chen, Tai-Jiang Mu, Shi-Min Hu

SIGGRAPH Asia, 2024

project page / paper link

We propose DIScene, which represents the entire 3D scene with a learnable structured scene graph to generate 3D scenes from a single image or text, with style-consistent, decoupled objects and clearly modeled interactions.


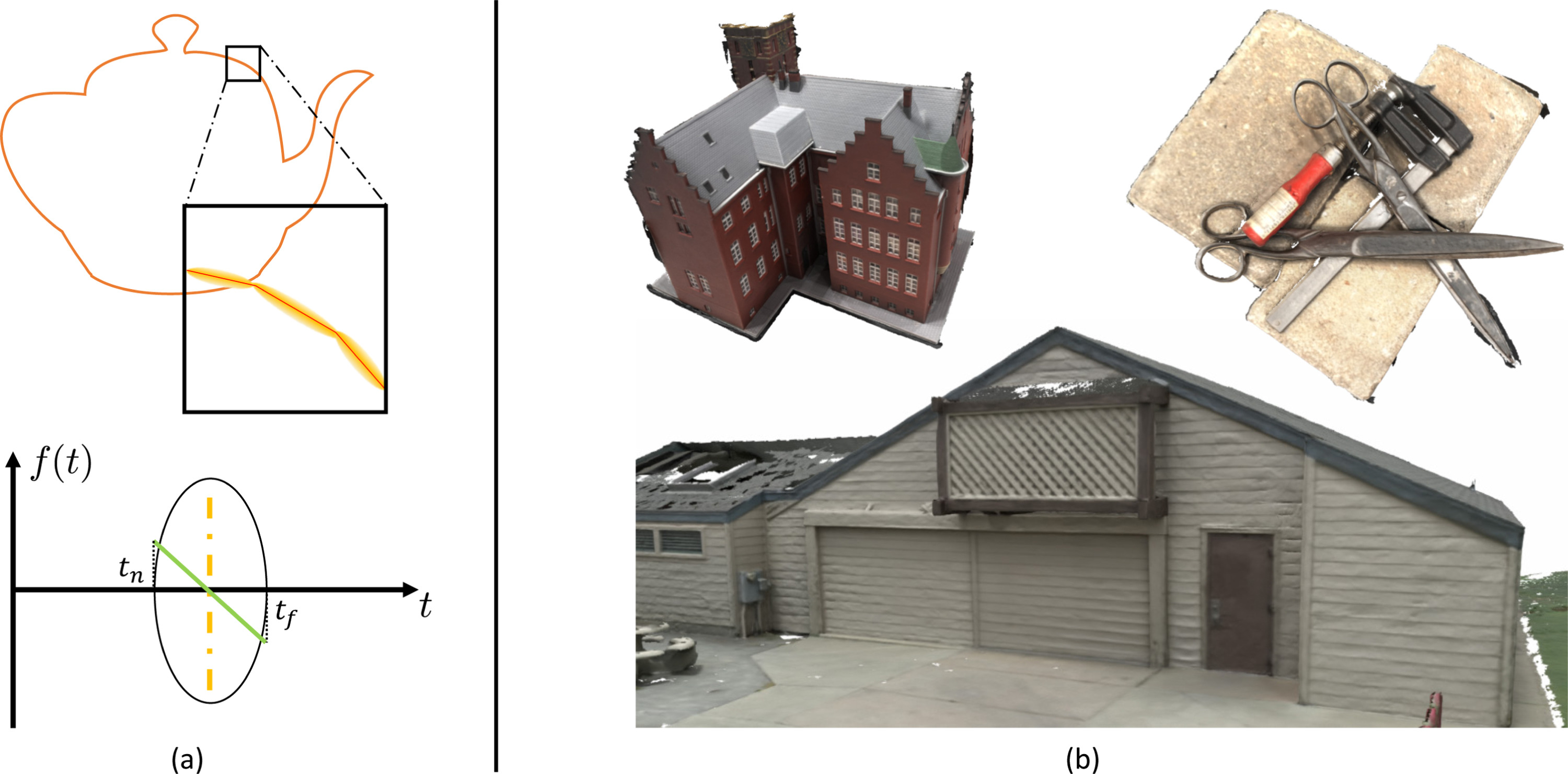

SDLKF: Signed Distance Linear Kernel Function for surface reconstruction

Hao-Xiang Chen*, Xiao-Lei Li*, Tai-Jiang Mu, Qunce Xu, Shi-Min Hu

Computers & Graphics, 2025

paper link

We present the Signed Distance Linear Kernel Function (SDLKF), an explicit representation for surface reconstruction that enables closed-form volume rendering with linear kernels. SDLKF balances optimization-friendly soft surfaces with precise hard reconstructions, achieving fast rendering and fine geometric detail.